AI Analyst: Advancing the SOC

Ádám Karóczi

2026.04.10

Security Operations Centers are under growing pressure. IT environments are becoming more complex, while SOC teams rarely receive matching increases in resources. They must monitor more technologies, process more data, and assess more alerts in less time to determine what is a real threat and what is only noise.

Over the past few years, the response has evolved from automation to AI assistants and now toward agentic AI. The question is no longer whether AI belongs in the SOC, but how it can deliver real operational value.

Why traditional SOC operations are no longer enough

Today’s SOCs face several connected problems. The volume of data and events keeps growing, and many organizations run more than 40 security and IT tools in parallel. Alert overload and false positives remain common, while cloud migration and automation create additional pressure. Qualified experts are hard to find and retain, and analysts burn out quickly. Key functions such as threat hunting or CTI processing are often missing.

Attackers are also using AI more effectively, which increases the pressure on defenders to adopt more advanced capabilities.

The first phase: automation and its limits

A major early step in SOC development was automation through SOAR systems. The goal was to automate parts of incident handling and, in some cases, response actions.

In practice, this proved difficult to scale. Fully automated intervention is often not acceptable for business, operational, or risk reasons. On top of that, each incident type usually requires its own playbook to be created, maintained, and refined.

As a result, SOAR has often become more of an analysis and process support tool than a fully autonomous incident management engine.

The next phase: AI assistants

The next phase introduced assistant-style AI. These tools let analysts ask questions in natural language, request summaries, gather information, and get support in interpreting a situation.

This is useful, but the human still drives the process. The analyst must ask the right questions, interpret the answers, and continue the investigation. In complex cases, that still requires strong expertise.

The next step: agentic AI in the SOC

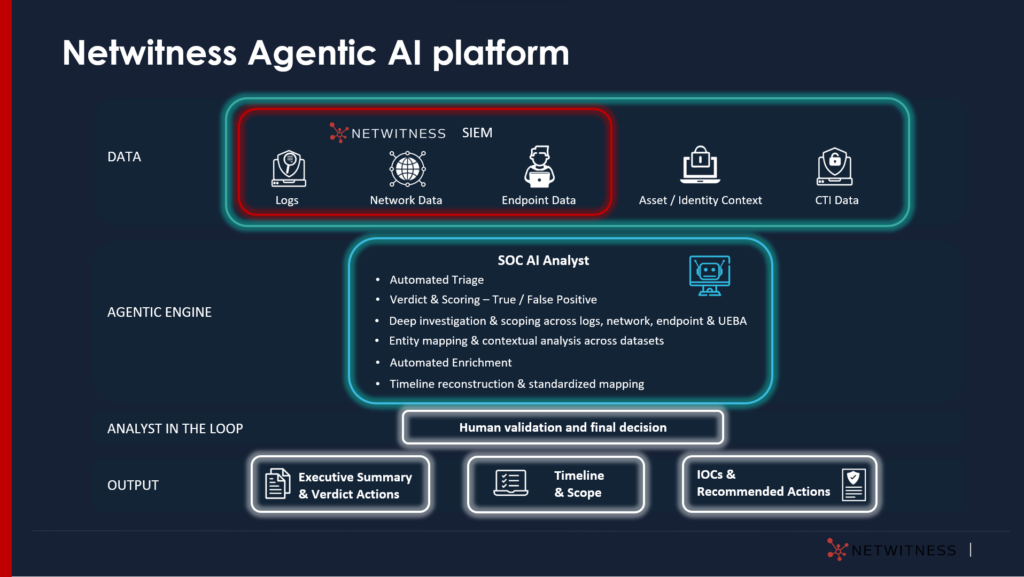

Agentic AI goes beyond question-and-answer support. It uses software agents built on large language models (LLMs) that incorporate domain expertise, methodology, and operational logic.

Such a system can gather information, build context, identify connections, create a narrative, and recommend next steps. At the same time, the analyst remains in control and can pause the process, add context, or override conclusions.

This human-in-the-loop model is essential. The goal is not to replace analysts, but to reduce repetitive and time-consuming work while keeping human validation and decision-making in place.
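The human-in-the-loop principle can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how any product is implemented: `Finding`, `investigate`, `close_with_review`, and the `review` callback are hypothetical names, and the agent's output is a hard-coded placeholder.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Finding:
    incident_id: str
    narrative: str
    recommended_verdict: str             # what the agent proposes
    final_verdict: Optional[str] = None  # set only after analyst review


def investigate(incident_id: str) -> Finding:
    """Hypothetical stand-in for the agent: returns a proposal, never a final decision."""
    return Finding(
        incident_id=incident_id,
        narrative="Placeholder narrative assembled from collected context.",
        recommended_verdict="escalate",
    )


def close_with_review(finding: Finding,
                      review: Callable[[Finding], Optional[str]]) -> Finding:
    """The analyst callback returns None to confirm, or a verdict to override."""
    override = review(finding)
    finding.final_verdict = (
        override if override is not None else finding.recommended_verdict
    )
    return finding


# Analyst accepts the recommendation as-is.
accepted = close_with_review(investigate("INC-1"), lambda f: None)
# Analyst overrides it after adding context the agent did not have.
overridden = close_with_review(investigate("INC-2"), lambda f: "false_positive")
```

The design point is that the agent can only fill `recommended_verdict`; `final_verdict` is written exclusively in the review step, so the override remains visible next to the original recommendation.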

AI Analyst in practice: the NetWitness platform

A practical example of this approach is an AI Analyst-type solution that reviews SIEM-generated incidents and recommends whether further action is needed.

Its strength is that it does not rely only on the alert itself. A meaningful investigation also needs to consider what happened on the affected host before and after the event, which user was linked to the activity, how the relevant process or service was launched, whether downloads, privilege escalation, or lateral movement occurred, and whether similar activity appeared elsewhere in the environment.

AI Analyst follows the same logic. It uses the SIEM as a broader data source and can collect information from logs, network data, endpoint telemetry, and supporting internal or external context such as asset, identity, or CTI data.

Why broader context matters

In many incidents, the alert alone is not enough to support the right decision. For example, communication with a known malicious IP does not automatically prove a compromise.

A reliable assessment may require understanding which process initiated the communication, under which user it ran, what triggered it, whether related downloads or lateral movement occurred, and whether similar activity appears in other systems.

Agentic AI adds value here because it can connect entities and activities across multiple data sources, helping reveal correlations that traditional rule-based logic may miss.
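A simplified way to picture this kind of cross-source linking is to treat shared entities (host, user, IP, process) as connections between events. The sketch below is an assumption-laden toy, not the logic of any specific product: the events are hand-written, and the field names and the `ENTITY_FIELDS` list are hypothetical.

```python
from collections import defaultdict

# Hypothetical, hand-written events from three sources; field names are assumptions.
events = [
    {"source": "network", "host": "ws-17", "dst_ip": "203.0.113.5"},
    {"source": "endpoint", "host": "ws-17", "process": "updater.exe", "user": "jdoe"},
    {"source": "logs", "host": "srv-02", "user": "jdoe", "action": "remote_logon"},
]

ENTITY_FIELDS = ("host", "user", "dst_ip", "process")


def build_entity_index(events):
    """Map each (field, value) entity to the indices of events that mention it."""
    index = defaultdict(set)
    for i, event in enumerate(events):
        for field in ENTITY_FIELDS:
            if field in event:
                index[(field, event[field])].add(i)
    return index


def related_events(events, start):
    """Return indices of all events reachable from `start` via shared entities."""
    index = build_entity_index(events)
    seen, queue = {start}, [start]
    while queue:
        current = queue.pop()
        for field in ENTITY_FIELDS:
            if field in events[current]:
                for j in index[(field, events[current][field])]:
                    if j not in seen:
                        seen.add(j)
                        queue.append(j)
    return sorted(seen)


# Starting from the network alert (index 0), the shared host links the endpoint
# event, and the shared user then links the logon on a second server.
chain = related_events(events, 0)
```

A single-source rule looking only at the network event would see one connection to a flagged address. Following shared entities surfaces the process, the user, and a possible follow-on logon elsewhere.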

More than a verdict: a meaningful incident report

The real value of these systems is not only deciding whether an event is a true or false positive, but also producing the context needed for action.

A structured AI-generated incident report may include a narrative of the likely attack chain, the relationships between affected systems and entities, relevant IOCs, a timeline, the scope of the incident, recommended next steps, and a final assessment of whether intervention is required.
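As an illustration, the report elements listed above could be captured in a simple data structure. The sketch below is hypothetical: the class name, field names, and sample values are assumptions made for this example and do not reflect an actual product schema.

```python
from dataclasses import dataclass, field, asdict
import json


@dataclass
class IncidentReport:
    attack_narrative: str
    entity_relationships: list = field(default_factory=list)  # (entity_a, relation, entity_b)
    iocs: list = field(default_factory=list)
    timeline: list = field(default_factory=list)
    scope: str = ""
    recommended_next_steps: list = field(default_factory=list)
    intervention_required: bool = False


# Placeholder values only; none of this is real incident data.
report = IncidentReport(
    attack_narrative="Placeholder: a workstation contacted a flagged IP, "
                     "then a logon followed on a server.",
    entity_relationships=[("ws-17", "connected_to", "203.0.113.5")],
    iocs=["203.0.113.5"],
    timeline=[{"time": "2026-01-01T10:00:00Z", "event": "outbound connection"}],
    scope="1 workstation, 1 server",
    recommended_next_steps=["Isolate ws-17", "Reset credentials for the affected user"],
    intervention_required=True,
)

# Serializing to JSON keeps the report machine-readable for ticketing or audit trails.
report_json = json.dumps(asdict(report), indent=2)
```

Keeping the final assessment as an explicit field, separate from the narrative, makes it easy to filter reports that need intervention while preserving the reasoning behind each one.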

This helps analysts make faster decisions while also supporting reporting, traceability, and auditability.

Beyond individual use cases: a broader approach

One of the key advantages of agentic AI is that it does not always need a separate workflow for every incident type. If the system combines analytical methods, industry practices, and the organization’s expertise, it can provide useful recommendations even in previously unseen situations.

That is a major difference from strictly playbook-driven operations.

Data protection and European compliance in AI deployment

SOC data often includes personal or personally identifiable information, so confidentiality and compliance are central issues in AI adoption.

In Europe, this is especially sensitive. Any viable solution must therefore be strong not only in performance and accuracy, but also in data handling. Anonymization, on-premises logical controls, and GDPR-compliant operation are basic requirements.
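One common building block for this kind of data handling is replacing identifiers with keyed tokens before data leaves a controlled environment. The sketch below is a minimal example using only the Python standard library; the function name, the email-only pattern, and the demo key are assumptions, and it does not describe how any particular product handles data. Note that this is pseudonymization rather than full anonymization: whoever holds the key can recompute the mapping, so the output is still personal data under GDPR.

```python
import hashlib
import hmac
import re

# Deliberately simple pattern for illustration; real logs carry many more
# identifier types (usernames, hostnames, IP addresses, and so on).
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def pseudonymize(text: str, key: bytes) -> str:
    """Replace email addresses with keyed tokens that are stable for a given key."""
    def token(match: re.Match) -> str:
        normalized = match.group(0).lower().encode("utf-8")
        digest = hmac.new(key, normalized, hashlib.sha256).hexdigest()
        return f"user_{digest[:12]}"
    return EMAIL_RE.sub(token, text)


# The same address always maps to the same token, so correlation across
# events still works without exposing the address itself.
masked = pseudonymize(
    "Failed logon for jane.doe@example.com from ws-17",
    b"demo-key-not-for-production",
)
```

Using a keyed HMAC instead of a plain hash means the tokens cannot be reproduced by simply hashing a list of known addresses without the key.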

Practical impact

The practical benefits appear on several levels. Analysts spend less time gathering raw information and more time validating findings and making decisions. Structured reports speed up decision-making and help reduce backlog, allowing teams to focus more on higher-risk cases.

According to the presented findings, traditional investigation time averaged 20–45 minutes, while AI-assisted processing generated an investigation report in 2–3 minutes. This does not remove the need for human expertise, but it changes how analyst time is used.
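Taken at face value, the quoted ranges imply roughly a 7x to 22x reduction in the time needed to reach a first report. The short calculation below shows where those bounds come from. It uses only the figures stated above and leaves out analyst review time, which the findings do not quantify.

```python
manual_minutes = (20, 45)  # traditional investigation: min, max
ai_minutes = (2, 3)        # AI-assisted report generation: min, max

# Most conservative case: fastest manual investigation vs. slowest AI report.
lowest_speedup = manual_minutes[0] / ai_minutes[1]   # 20 / 3
# Most favorable case: slowest manual investigation vs. fastest AI report.
highest_speedup = manual_minutes[1] / ai_minutes[0]  # 45 / 2

least_saved = manual_minutes[0] - ai_minutes[1]  # minutes saved per incident, low end
most_saved = manual_minutes[1] - ai_minutes[0]   # minutes saved per incident, high end

print(f"Speedup: {lowest_speedup:.1f}x to {highest_speedup:.1f}x")
print(f"Time saved per incident: {least_saved} to {most_saved} minutes")
```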

People and AI working together

The future SOC is unlikely to be fully autonomous. A more realistic model is a hybrid one in which different agents support analysts, threat hunters, CTI functions, and even management reporting.

These agents do not work in isolation. They collaborate, share context, and strengthen each other’s outputs.

Why AI Analyst is worth implementing

AI Analyst is not just another AI feature. It is an agentic AI capability that helps organizations investigate incidents faster, more accurately, and with less manual effort while keeping decision-making under human control.

Its business value is clear: it reduces analyst workload, shortens investigation time, lowers backlog, improves false-positive filtering, and produces standardized reports. For organizations seeking a scalable, production-ready, and technically sound AI capability in the SOC, this offers a clear operational advantage.

If the goal is not only to handle more alerts but to validate more real threats in less time, AI Analyst is already a practical solution.
